Rendering images in a way that allows gradients to be computed with respect to scene parameters

Propagate gradients of image pixels to scene parameters

Use cases

- Pair with optimisation algorithms to solve inverse rendering problems

- Reflectance/lighting estimation (Inverse Path Tracing for Joint Material and Lighting Estimation, Azinovic)

- 3D reconstruction from one/multiple input pictures (Large steps in inverse rendering of geometry, Nicolet)

- Propagate gradients into a network to learn the scene’s neural representation: learn a map from position/viewpoint to colour

- Novel view synthesis (NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis, Mildenhall)

- Scene relighting (Neural Rendering Framework for Free-Viewpoint Relighting, Chen)

- Scene editing (IntrinsicNeRF: Learning Intrinsic Neural Radiance Fields for Editable Novel View Synthesis, Ye)
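The first use case above (pairing a differentiable renderer with an optimiser) can be illustrated with a toy problem. This is only a sketch under strong assumptions: the "renderer" is a single Lambertian-style pixel, and `render`, `d_render_d_albedo`, `light` and `cos_theta` are hypothetical names chosen for illustration, not taken from any of the cited papers.

```python
def render(albedo, light=2.0, cos_theta=0.5):
    """Toy one-pixel renderer: pixel = albedo * light * cos(theta)."""
    return albedo * light * cos_theta

def d_render_d_albedo(albedo, light=2.0, cos_theta=0.5):
    """Analytic derivative of the pixel value with respect to the albedo."""
    return light * cos_theta

# "Observed" pixel produced by an unknown albedo we want to recover.
target = render(0.8)

albedo = 0.1          # initial guess
learning_rate = 0.2
for _ in range(200):
    residual = render(albedo) - target
    # Chain rule: d(loss)/d(albedo) = 2 * residual * d(pixel)/d(albedo)
    grad = 2.0 * residual * d_render_d_albedo(albedo)
    albedo -= learning_rate * grad

print(albedo)
```

A real inverse rendering problem has many parameters and a noisy Monte Carlo renderer, but the loop has the same shape: render, compare to the target, push the gradient back to the scene parameters.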

Physics-based differentiable rendering

Differentiable rendering techniques corresponding to global illumination algorithms

Main challenge

In order to backpropagate gradients from pixel colour to scene parameters, we need to be able to differentiate the function that gave these pixel colours with respect to the different scene parameters. We want to be able to compute:

[math]\frac{d}{dx} \int_{a(x)}^{b(x)} f(t, x) dt[/math]

- [math]x[/math]: scene parameter (vertex position, material parameter, …)

- [math]t[/math]: light path (we integrate over the space of all possible light transport paths)

- [math]f[/math]: light contribution of a path [math]t[/math] for the current scene configuration defined by [math]x[/math]

- [math]a, b[/math]: boundaries of the set of all valid light paths

The complexity resides in the fact that [math]a[/math] and [math]b[/math] depend on [math]x[/math]: as you change the scene, the set of valid light paths also changes (some paths become invalid because they are blocked, others appear). So the Leibniz Integral Rule gives us:

[math]\frac{d}{dx} \int_{a(x)}^{b(x)} f(t, x) dt = f(b(x), x) \cdot b'(x) - f(a(x), x) \cdot a'(x) + \int_{a(x)}^{b(x)} \frac{\partial f}{\partial x} dt [/math]

Introducing an additional boundary term [math]f(b(x), x) \cdot b'(x) - f(a(x), x) \cdot a'(x)[/math]

These boundary terms correspond to scenarios where tiny changes in scene parameters cause discrete changes in which light paths exist; these changes are driven by the visibility function. Visibility is binary, and therefore not continuous -> we stumble upon a discontinuous phenomenon in a continuous optimisation problem, and we can’t rely on plain automatic differentiation of the integrand, which assumes continuous behaviour.
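A small 1D experiment makes the missing boundary term visible. It is a sketch under simplifying assumptions: the integrand is a step "visibility" function on [0, 1] whose edge sits at the scene parameter [math]x[/math], so the integral equals [math]x[/math] and its true derivative is 1. The function names (`integrand`, `d_integrand_dx`, `mc_integral`) are hypothetical.

```python
import random

def integrand(t, x):
    """Step 'visibility': 1 where t is lit (t < x), 0 where it is occluded."""
    return 1.0 if t < x else 0.0

def d_integrand_dx(t, x):
    """Pointwise derivative with respect to x: zero almost everywhere."""
    return 0.0

def mc_integral(fn, x, n=100_000, seed=0):
    """Uniform Monte Carlo estimate of the integral of fn(t, x) over t in [0, 1]."""
    rng = random.Random(seed)
    return sum(fn(rng.random(), x) for _ in range(n)) / n

x = 0.3
eps = 1e-2

# Differentiating only inside the integral (interior term) gives nothing.
naive = mc_integral(d_integrand_dx, x)

# Central finite differences with the same samples see the edge move.
finite_diff = (mc_integral(integrand, x + eps)
               - mc_integral(integrand, x - eps)) / (2 * eps)

# Leibniz boundary term: f just inside the edge (1) times the edge speed b'(x) = 1.
boundary_term = 1.0 * 1.0

print(naive, finite_diff, boundary_term)
```

The interior estimate is exactly 0, while the finite-difference estimate is close to 1, which is the value the boundary term supplies.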

Current Solutions

Boundary sampling methods

Explicitly compute the boundary term by importance sampling paths in the boundary sample space

Solution from “Path-space differentiable rendering”, Zhang et al.

Fundamental rendering equation can be expressed in path-space form (see Veach 1997):

[math]I = \int_{\Omega} f(x) d\mu(x) [/math]

- [math]I[/math]: radiometric measurement (pixel intensity)

- [math]\Omega[/math]: path space (set of all possible light paths)

- [math]x=(x_0, \ldots, x_N)[/math]: one such light path

- [math]\mu(x)[/math]: area-product measure, representing sampling over surface interactions

- [math]f(x)[/math]: path contribution function

Expresses the final pixel colour as an integral over all possible light paths -> foundation of bidirectional path tracing -> differentiate this path integral

Goal

Compute the derivative of the above with respect to an arbitrary scene parameter [math]\pi[/math]:

[math]\frac{\partial I}{\partial \pi} = \frac{\partial}{\partial \pi} \int_{\Omega(\pi)} f(x, \pi) d\mu(x, \pi) [/math]

Main idea

Split the derivative into two components

[math]\frac{\partial I}{\partial \pi} = \int_{\Omega(\pi)} f' d\mu + \int_{\partial \Omega(\pi)} \Delta f V_{\partial \Omega} dl[/math]

- [math]f'[/math]: normal scene derivative of the path contribution

- [math]\partial \Omega(\pi)[/math]: boundary of path space (paths near shadow edges)

- [math]\Delta f[/math]: jump in path contribution across a discontinuity

- [math]V_{\partial \Omega}[/math]: normal velocity of the boundary curve (how fast the visibility changes)

- [math]dl[/math]: integration over the boundary paths

- Interior integral (left): smooth changes within the path space

- Boundary integral (right): handles discontinuities

-> You can now derive tailored Monte Carlo estimators for each part: they are still high-dimensional integrals that can’t be computed exactly, but we can sample light paths and use Monte Carlo integration to estimate them:

[math]\int f(x) dx \approx \frac{1}{N} \sum_{i=1}^{N} \frac{f(x^{(i)})}{p(x^{(i)})}[/math]

- Interior Monte Carlo estimator: classic approach, sample paths through path tracing. For each path [math]x[/math], compute [math]f'(x)[/math] which measures how much that path’s contribution changes with [math]\pi[/math]

- Boundary estimator: more complex, requires special sampling (e.g. tracing toward visibility edges) because random sampling will almost never hit the boundary: deliberately generate paths that land on the boundary

- Visibility edge sampling: explicitly sample configurations where visibility flips from 1 to 0, trace rays that go towards silhouette edges

- Inverse path reparameterisation: reparameterise the path space so that boundary paths lie on a domain with non-zero measure

- Finite difference aided sampling: sample normal light paths, perturb the scene parameter ([math]\pm \epsilon[/math]) and see if the contribution changes abruptly to know if that path is near a boundary. If yes, use finite differences to estimate the jump.
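The Monte Carlo estimator written above, [math]\frac{1}{N} \sum f(x^{(i)}) / p(x^{(i)})[/math], can be tried on a 1D integral whose value is known. This is a minimal sketch, not a path tracer: the integrand [math]3t^2[/math] on [0, 1] (exact integral 1) and the sampling density [math]p(t) = 2t[/math] are assumptions picked for illustration, and all function names are hypothetical.

```python
import math
import random

def integrand(t):
    """Function to integrate over [0, 1]; its exact integral is 1."""
    return 3.0 * t * t

def pdf(t):
    """Sampling density p(t) = 2t, which puts more samples where the integrand is large."""
    return 2.0 * t

def sample(rng):
    """Draw t with density p(t) = 2t by inverting its CDF t^2."""
    u = 1.0 - rng.random()  # in (0, 1], avoids t = 0 where the pdf vanishes
    return math.sqrt(u)

def mc_estimate(n, seed=0):
    """Importance-sampled estimate: average of f(t_i) / p(t_i)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        t = sample(rng)
        total += integrand(t) / pdf(t)
    return total / n

estimate = mc_estimate(100_000)
print(estimate)
```

The interior estimator has this form with paths in place of [math]t[/math] and [math]f'[/math] in place of the integrand; the boundary estimator needs a density that actually places samples on the boundary.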

Reparameterisation methods

Reparameterise the integral domain by tracing auxiliary rays such that the geometric discontinuities remain static when scene parameters change

Solution of “Reparameterising discontinuous integrands for differentiable rendering”, Loubet et al.

Look into Warped-Area Reparameterization of Differential Path Integrals, Xu et al. and “Reparameterising discontinuous integrands for differentiable rendering”, Loubet et al.

Neural Radiance Fields (NeRFs)

Synthesizing novel views of complex 3D scenes based on a sparse set of 2D images

Core Idea

Use a neural network to model a continuous volumetric scene function:

[math](x, y, z), (\theta, \phi) \longrightarrow (r, g, b), \sigma[/math]

- [math](x, y, z)[/math]: 3D coordinates of a point in the scene

- [math](\theta, \phi)[/math]: viewing direction, direction of the camera ray that hits the point

- [math](r, g, b)[/math]: colour of the point as seen from the direction

- [math]\sigma[/math]: volume density: how opaque the scene is at that point (how likely a ray is to terminate there)

Use the outputs for volumetric rendering: colours and densities are integrated along each camera ray to produce the pixel colour.

Training

- Input Data

- Set of posed RGB images of a scene

- Corresponding camera intrinsics (focal length, …) and extrinsics (position, orientation)

- Ray sampling

- For each training iteration

- A random batch of pixels is selected from the input images

- For each pixel

- Compute corresponding camera ray (originates from the camera and passes through that pixel into the scene)

- Sample multiple 3D points along the ray between near and far bounds: they are the coordinates [math]x_i[/math] that are fed into the NeRF network

- Neural Network Inference

- For each sampled point [math]x_i[/math]

- Apply positional encoding to [math]x_i[/math] and the ray direction [math]d[/math]

- Lift input into higher-frequency space using sinusoids

- Encoded inputs are passed through the MLP and get outputs [math](c_i, \sigma_i)[/math]

- Volume Rendering

- Use outputs [math](c_i, \sigma_i)[/math] to numerically integrate along each ray to compute the final colour [math]C[/math] of the pixel using the volume rendering equation

- Loss function

- Compare predicted [math]C[/math] to the ground truth pixel colour with a standard MSE loss

- Optimisation

- Backpropagate the loss through the network
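The volume rendering and loss steps above can be sketched for a single ray. This is a minimal pure-Python sketch assuming the usual NeRF quadrature, where each sample contributes its colour weighted by its opacity [math]1 - e^{-\sigma_i \delta_i}[/math] and by the transmittance accumulated in front of it. `composite_ray` and `mse` are hypothetical names, and the colours and densities are hard-coded stand-ins for network outputs.

```python
import math

def composite_ray(colors, sigmas, deltas):
    """Accumulate colour front to back along one ray.

    colors: list of (r, g, b) per sample
    sigmas: volume density per sample
    deltas: distance between consecutive samples
    """
    transmittance = 1.0          # fraction of light not yet blocked
    pixel = [0.0, 0.0, 0.0]
    for color, sigma, delta in zip(colors, sigmas, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment
        weight = transmittance * alpha
        for channel in range(3):
            pixel[channel] += weight * color[channel]
        transmittance *= 1.0 - alpha
    return pixel, transmittance

def mse(predicted, target):
    """Mean squared error between two colours."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)

# Two samples: a nearly transparent red one in front of a dense green one.
colors = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
sigmas = [0.1, 50.0]
deltas = [0.5, 0.5]

pixel, remaining = composite_ray(colors, sigmas, deltas)
loss = mse(pixel, (0.0, 1.0, 0.0))
print(pixel, remaining, loss)
```

Every operation here is differentiable with respect to the colours and densities, which is what lets the loss be backpropagated into the network weights.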

Architecture

Fully connected feedforward network (MLP) with positional encoding applied to inputs
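The positional encoding can be sketched as follows, assuming the sinusoidal form from the NeRF paper (sines and cosines at frequencies [math]2^k \pi[/math]). `positional_encoding` and `num_freqs` are hypothetical names; the paper uses 10 frequencies for positions and 4 for directions, and the direction is assumed here to be given as a 3D unit vector rather than as [math](\theta, \phi)[/math].

```python
import math

def positional_encoding(p, num_freqs):
    """Map each coordinate of p to sin/cos features at doubling frequencies."""
    features = []
    for value in p:
        for k in range(num_freqs):
            angle = (2.0 ** k) * math.pi * value
            features.append(math.sin(angle))
            features.append(math.cos(angle))
    return features

position = [0.1, 0.2, 0.3]
direction = [0.0, 0.0, 1.0]

encoded_position = positional_encoding(position, num_freqs=10)
encoded_direction = positional_encoding(direction, num_freqs=4)
print(len(encoded_position), len(encoded_direction))
```

Each 3D input becomes 3 x 2 x `num_freqs` features, so the MLP sees 60 values for the position and 24 for the direction in this setup.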

Look more into it

Strengths/Limitations

Strengths

- High quality photorealistic novel views, outperforms traditional 3D reconstruction

- Continuous scene representation

- Compact scene representation (just neural network weights)

- No explicit 3D model or mesh needed

- Can learn view dependent effects (reflection, specular highlights) since it conditions on viewing direction

Limitations

- Slow training: hours to days, the network is evaluated at hundreds of thousands of 3D points in each iteration

- Slow inference: minutes, every pixel requires querying and integrating along a ray

- Static scenes only: struggles with motion, deforming objects, dynamic lighting

- Needs accurate camera poses: requires precise camera extrinsics

- Scene specific model: each model captures one scene

Variants and extensions

Instant-NGP

- Goal: make NeRF trainable and renderable in real-time

- Main idea

- Replace the frequency encoding and large MLP with a multi-resolution hash grid for scene encoding

- Use tiny neural networks and CUDA optimised code

- Improvement: trains in seconds to minutes instead of days
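The multi-resolution hash grid idea can be sketched as a lookup from a 3D point to one table slot per resolution level. This is a simplified illustration, not the Instant-NGP implementation: it assumes an XOR-of-primes spatial hash and geometrically spaced resolutions, looks up only the lower corner of the enclosing cell (no trilinear interpolation, no learned feature vectors), and all function and parameter names are hypothetical.

```python
# Per-dimension multipliers for the spatial hash (the first one is 1).
PRIMES = (1, 2654435761, 805459861)

def hash_index(ix, iy, iz, table_size):
    """Map integer grid-vertex coordinates to a slot in a feature table."""
    h = (ix * PRIMES[0]) ^ (iy * PRIMES[1]) ^ (iz * PRIMES[2])
    return h % table_size

def level_resolutions(n_min, n_max, num_levels):
    """Grid resolutions growing geometrically from coarse to fine."""
    growth = (n_max / n_min) ** (1.0 / (num_levels - 1))
    return [int(n_min * growth ** level) for level in range(num_levels)]

def vertex_slots(point, resolutions, table_size):
    """For a point in [0, 1)^3, return one table slot per resolution level."""
    slots = []
    for res in resolutions:
        ix, iy, iz = (int(coord * res) for coord in point)
        slots.append(hash_index(ix, iy, iz, table_size))
    return slots

table_size = 2 ** 14
resolutions = level_resolutions(n_min=16, n_max=512, num_levels=6)
slots = vertex_slots((0.25, 0.5, 0.75), resolutions, table_size)
print(resolutions, slots)
```

In the full method each slot stores a small trainable feature vector, and the features gathered across levels are concatenated and fed to the tiny network mentioned above.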

Generalisation across scenes: PixelNeRF / MVSNeRF / Generalizable NeRFs

- Goal: train a single model that works on new scenes at test time

- Main idea

- Use image features as conditioning inputs

- Learn to predict radiance fields without retraining

- Improvement: can synthesise novel views of unseen scenes with just a few images

Large-scale scenes: Mega-NeRF / Block-NeRF / Urban-NeRF

- Goal: NeRFs for huge real-world environments

- Main idea

- Use scene partitioning

- Compress and blend local NeRFs across large areas

- Handle variability in lighting and weather

Dynamic scenes: D-NeRF / Nerfies / HyperNeRF / NSFF

- Goal: model non-static scenes

- Main idea

- Add time variable to network input

- Learn deformation field over time

- Use optical flow or scene flow constraints

Trends

- NeRFs as graphics engines

- Text to NeRF, using language models to generate 3D scenes

- NeRF + SLAM for real-time tracking and mapping

3D Gaussian Splatting (3DGS)

TODO

- uses a discrete set of 3D Gaussians as base primitives

Below this is draft, things to sort out and to look into more

Key Challenges

- Non-differentiability of visibility: changes in geometry cause discrete changes in visibility (occlusion) -> non-continuous function -> not differentiable

- High computational cost: rendering with gradients is expensive

- Ill-posed inverse problems: multiple combinations of scene parameters can produce the same image

- Complexity of rendering equation: full differentiability of global illumination is difficult due to recursive nature of light transport

- Gradient noise: some differentiable renderers suffer from that

Selected work

State of research

Differentiable renderers

- Soft Rasterizer (2019): smooth approximations to handle visibility

- Neural mesh renderer (2018): approximate rasterisation gradient

- Mitsuba 2 (2020): physical renderer with automatic differentiation

- redner (2019): Monte Carlo differentiable renderer

- Kaolin/PyTorch3D: toolkits with differentiable rasterizers

Neural approaches

- NeRF and variants: implicit scene representations using volumetric rendering

- Volumetric differentiable renderers: use differentiable ray marching over density and colour fields

- Inverse rendering networks: learn to infer geometry, lighting and materials from single/multiple images

Trends

- Combine NeRFs with differentiable rendering (NeRF++)

- Increased focus on real-time differentiable rendering (rasterisation with approximated gradients)

To look into / Sources

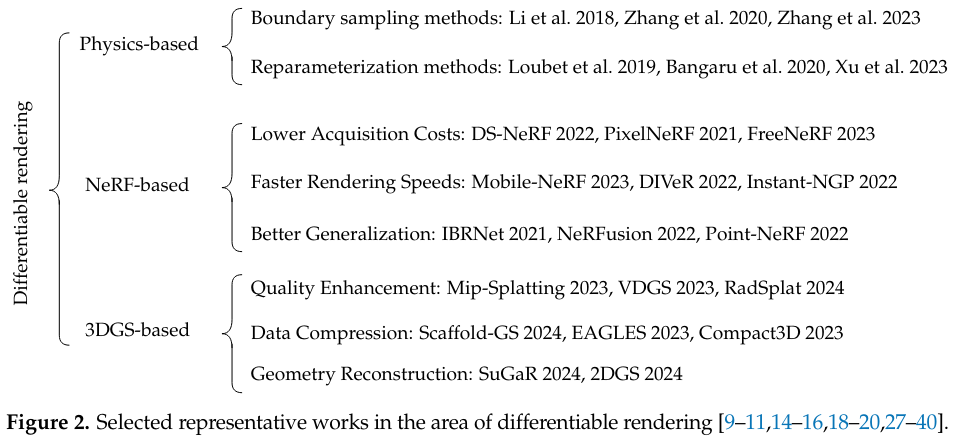

- A Brief Review on Differentiable Rendering: Recent Advances and Challenges, Ruicheng Gao and Yue Qi

- Differentiable Rendering: A Survey, Hiroharu Kato, Deniz Beker, Mihai Morariu, Takahiro Ando, Toru Matsuoka, Wadim Kehl and Adrien Gaidon

- A Survey on Physics-based Differentiable Rendering, Y. Zeng, G. Cai and S. Zhao